Metadata Leakage in Science: Costs, Causes, and Fixes

Metadata leakage costs biomedical research billions annually. Learn how unreported experimental variables propagate and what teams can do to reduce it.

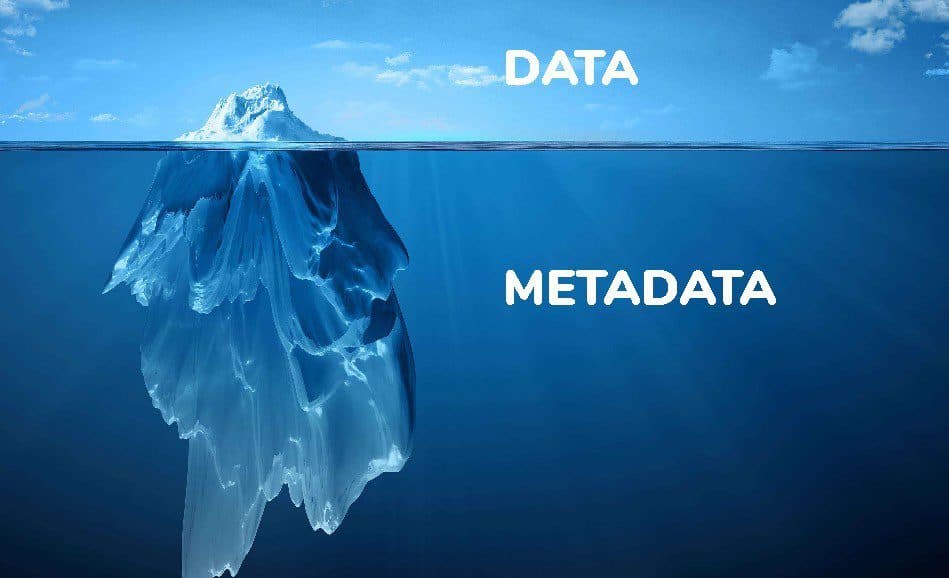

Every scientific experiment varies in how it is performed. These small variations limit the conclusions that the resulting data can support. When researchers compare data within and across domains, the variations accumulate until drawing conclusions across experimental results becomes untenable. I call this metadata leakage: small pieces of information about the experimental design that go unrecorded. In fields like biology, the problem is central to data interpretability.

Take for example a biological assay measuring protein expression. Variables such as cell passage number, serum batch variations, incubation timing differences, and slight temperature fluctuations are rarely reported with precision in methods sections. Each unreported variable introduces a potential confounding factor. This is one reason why standardized quality control metrics for genomics data are essential - without consistent QC standards, metadata leakage compounds at every stage of analysis.

As these experiments are replicated across laboratories, the unknown variables multiply. Laboratory A might maintain cells at exactly 37.0°C, while Laboratory B's incubator fluctuates between 36.8-37.2°C. Neither lab reports these specifics, creating invisible variation in the resulting data. When meta-analyses attempt to synthesize findings from dozens or hundreds of studies, these hidden variables create interpretability drift.

These problems cascade and accumulate as one moves through the biological domains. Interpretability drift in genomics data compounds the drift in transcriptomics, then proteomics, then pharmacodynamics, and finally clinical data. For single-cell data, this drift is particularly acute - our overview of scRNA-seq processing metrics illustrates how quality control at the cell level can mitigate some of these cascading effects. At each transfer point, the uncertainty propagates and expands, often invisibly.
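To make the compounding concrete, here is a minimal sketch in Python. It assumes, purely for illustration, that each domain contributes an independent relative uncertainty and that independent uncertainties combine as a root sum of squares. The stage names follow the chain above; the percentages are hypothetical placeholders, not measured values.

```python
import math

# Hypothetical relative uncertainty added by unreported variables at each stage.
# These numbers are illustrative assumptions, not measurements.
STAGE_UNCERTAINTY = {
    "genomics": 0.05,
    "transcriptomics": 0.08,
    "proteomics": 0.10,
    "pharmacodynamics": 0.12,
    "clinical": 0.15,
}


def cumulative_uncertainty(stage_uncertainty):
    """Return (stage, combined relative uncertainty) after each stage.

    Assumes the per-stage errors are independent, so they combine
    as the square root of the sum of squares.
    """
    running = []
    sum_of_squares = 0.0
    for stage, sigma in stage_uncertainty.items():
        sum_of_squares += sigma ** 2
        running.append((stage, math.sqrt(sum_of_squares)))
    return running


if __name__ == "__main__":
    for stage, total in cumulative_uncertainty(STAGE_UNCERTAINTY):
        print(f"{stage:>17}: {total:.1%}")
```

Under these assumptions the combined uncertainty can only grow from one stage to the next, which is the sense in which drift "propagates and expands". Correlated errors, which are plausible when labs share reagents or protocols, would need a different model.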

Historical evolution and scale

The nature and impact of metadata leakage have been transformed by the evolution of scientific practice. In the 1970s, scientific experiments were typically smaller in scale and conducted by individual researchers who personally managed each step. A typical biochemistry paper from this era might describe a handful of carefully controlled experiments, with methods sections that, while not comprehensive by today's standards, reflected the researcher's direct involvement in every procedural step.

Today’s high-throughput methods generate vast datasets but introduce much greater complexity. A single RNA sequencing experiment can generate data equivalent to hundreds of traditional Northern blots, but the experimental context becomes exponentially more complex. Automated liquid handling systems introduce batch effects across 96-well plates. Sequencing flow cells create position-dependent biases. Sample processing queues introduce temporal variations that affect thousands of measurements simultaneously. Each of these factors represents a potential source of metadata leakage, but documenting them requires systematic tracking that often exceeds the capacity of traditional methods sections.

A 1975 cell biology paper might report experiments on perhaps 10-50 individual samples, with each sample representing a discrete, manually controlled procedure. The researcher could reasonably remember and document the specific conditions for each experiment. In contrast, a contemporary genomics study might analyze 10,000 samples processed across multiple facilities over several months. The metadata required to fully document this experiment would include equipment serial numbers, technician identities, reagent lot numbers, processing timestamps, and environmental conditions for each step - a documentation burden that often proves practically impossible.

This scaling problem compounds as experiments become more collaborative and distributed. Multi-institutional studies, while scientifically powerful, create metadata capture challenges that simply did not exist in earlier eras. When samples are collected at one institution, processed at a second, and analyzed at a third, the opportunities for metadata loss multiply at each handoff point. Electronic data sharing enables rapid scientific progress but often strips away the contextual information that was implicitly preserved when researchers worked within single laboratories.

The automation revolution, while increasing experimental throughput and reducing human error, has paradoxically made many experimental conditions less visible to researchers. A scientist manually pipetting solutions in 1980 would directly observe temperature fluctuations, timing variations, and equipment quirks. Modern automated systems can mask these same variations behind apparently consistent procedures, creating metadata leakage that is both more systematic and more difficult to detect.

Perhaps most significantly, the pressure to generate large datasets has created a cultural shift where methodological precision is often sacrificed for experimental scale. The scientific reward structure increasingly favors papers with extensive data over those with exhaustive methodological documentation. This creates a feedback loop where metadata leakage becomes normalized and institutionalized within research practices.

Economic impact

The economic consequences of metadata leakage represent one of science's most substantial but underrecognized inefficiencies. Conservative estimates suggest that irreproducibility costs the United States alone $28-50 billion annually in biomedical research, with metadata leakage contributing significantly to this figure through its role in creating non-replicable experimental conditions.

Pharmaceutical development provides a more direct quantification of these costs. The average drug development program requires approximately $2.6 billion over 10-15 years, with failure rates exceeding 90% for compounds entering clinical trials. While not all failures stem from metadata leakage, a substantial fraction can be attributed to inadequate experimental context transfer between preclinical and clinical phases. When promising preclinical results fail to translate because of uncontrolled methodological variations, entire development programs collapse. Even a 5% reduction in late-stage clinical failures through improved metadata capture would save the pharmaceutical industry billions annually.

Academic research suffers parallel losses through computational resource waste. High-throughput genomics experiments that fail to account for batch effects or processing variations often require complete reanalysis or experimental repetition. Given that a single large-scale RNA sequencing study can cost $500,000-$2 million, and that metadata-related artifacts necessitate reanalysis in an estimated 20-30% of published studies, the cumulative computational waste likely exceeds hundreds of millions of dollars annually across major research institutions.

The indirect costs may be even more substantial. When researchers cannot reliably build upon previous work due to insufficient methodological documentation, scientific progress slows across entire fields. The opportunity cost of delayed discoveries, particularly in areas like cancer therapeutics or climate science, potentially dwarfs the direct experimental costs. A cancer treatment delayed by five years due to irreproducible foundational research represents not just scientific inefficiency but profound human cost.

Meta-analyses and systematic reviews, increasingly central to evidence-based decision making, often exclude substantial proportions of otherwise relevant studies due to inadequate methodological reporting. When a medical meta-analysis excludes 40-60% of potentially relevant studies due to insufficient experimental detail, the resulting clinical guidelines may be based on systematically biased subsets of available evidence. The downstream healthcare costs of suboptimal treatment protocols influenced by such biased evidence likely exceed billions of dollars annually.

Perhaps most significantly, metadata leakage creates cascading inefficiencies throughout research ecosystems. When foundational datasets cannot be properly integrated due to missing experimental context, every subsequent analysis built upon those datasets inherits and amplifies the uncertainty. In fields like systems biology, where researchers attempt to integrate genomics, proteomics, and metabolomics data, metadata leakage at any level can invalidate months or years of downstream computational work.

A conservative back-of-envelope calculation suggests that metadata leakage costs the global research enterprise at least $10-15 billion annually through direct experimental waste, computational inefficiency, and delayed translation of research findings. This figure excludes the opportunity costs of delayed discoveries and the societal impact of suboptimal evidence-based policies, which could easily double or triple these estimates.

Pharmaceutical development offers a clear example. A drug candidate might progress through preclinical testing based on data with significant underlying metadata variation. When the compound fails unexpectedly in clinical trials, researchers struggle to pinpoint where the disconnect occurred because the critical metadata was never captured.

Practical strategies for reducing metadata leakage

The scale of the problem can feel paralyzing, but concrete steps exist at every level of scientific practice.

For individual researchers

- Adopt minimum metadata standards for your domain. Standards like MIAME (microarray), MINSEQE (sequencing), and MIAPE (proteomics) define the baseline metadata that should accompany every dataset. If your domain lacks a standard, use ISA-Tab as a general framework.

- Document environmental conditions systematically. Record equipment serial numbers, reagent lot numbers, room temperature, and processing timestamps for every experiment - even when these are not required by your methods section.

- Use structured metadata templates, not free text. Free-text methods sections are the primary vector for metadata loss. Structured templates with required fields force completeness.

- Version-control your protocols. Tools like protocols.io allow researchers to publish, share, and version their experimental protocols with full metadata attached.
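As one way to picture a structured template with required fields, here is a minimal Python sketch. The `AssayMetadata` class and its field names are hypothetical, loosely based on the variables mentioned earlier in this article; they are not taken from MIAME, MINSEQE, MIAPE, or ISA-Tab, and a real template should follow whichever standard applies to your domain.

```python
from dataclasses import dataclass, fields
from datetime import datetime
from typing import Optional


@dataclass
class AssayMetadata:
    """Hypothetical structured template for a single experimental run."""

    cell_passage_number: Optional[int] = None
    serum_lot: Optional[str] = None
    incubator_serial: Optional[str] = None
    incubation_temp_c: Optional[float] = None
    processed_at: Optional[datetime] = None
    protocol_version: Optional[str] = None

    def missing_fields(self):
        """Return the names of fields left empty (None or a blank string)."""
        missing = []
        for field in fields(self):
            value = getattr(self, field.name)
            if value is None or (isinstance(value, str) and not value.strip()):
                missing.append(field.name)
        return missing


# Example record with two fields left unfilled (values are made up).
record = AssayMetadata(
    cell_passage_number=12,
    serum_lot="LOT-EXAMPLE-001",
    incubation_temp_c=37.0,
    processed_at=datetime(2024, 1, 15, 9, 30),
)
print(record.missing_fields())  # ['incubator_serial', 'protocol_version']
```

Unlike a free-text methods paragraph, the template makes an omission visible as an explicit empty field that can be checked before the data leaves the bench.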

For laboratory managers and PIs

- Implement electronic lab notebooks (ELNs) with mandatory metadata fields. Paper notebooks cannot enforce completeness. ELNs with required fields prevent metadata omission at the point of data generation.

- Establish batch-tracking systems. Every reagent lot, equipment calibration, and environmental reading should be logged automatically or through structured entry.

- Conduct metadata audits before data sharing. Before depositing data in public repositories, audit the metadata against relevant domain standards. Flag gaps before they propagate.
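A pre-deposit metadata audit can be as simple as comparing each record against a checklist. The sketch below is a hedged illustration in Python: the `REQUIRED_FIELDS` list and the sample records are hypothetical, and an actual audit would draw its checklist from the relevant domain standard or repository submission requirements.

```python
# Hypothetical checklist; a real audit would take this from the relevant standard.
REQUIRED_FIELDS = [
    "sample_id",
    "reagent_lot",
    "instrument_serial",
    "calibration_date",
    "room_temp_c",
]


def is_filled(value):
    """Treat None and empty strings as missing; zero is a valid reading."""
    return value is not None and value != ""


def audit_metadata(records, required=REQUIRED_FIELDS):
    """Return {record label: [missing field names]} for records with gaps."""
    gaps = {}
    for index, record in enumerate(records):
        missing = [name for name in required if not is_filled(record.get(name))]
        if missing:
            label = record.get("sample_id") or f"record #{index}"
            gaps[label] = missing
    return gaps


def completeness(records, required=REQUIRED_FIELDS):
    """Fraction of required fields that are filled across all records."""
    total = len(records) * len(required)
    if total == 0:
        return 1.0
    filled = sum(
        1 for record in records for name in required if is_filled(record.get(name))
    )
    return filled / total


# Made-up records: the second one has two gaps.
records = [
    {"sample_id": "S1", "reagent_lot": "LOT-A", "instrument_serial": "SN-100",
     "calibration_date": "2024-01-10", "room_temp_c": 21.5},
    {"sample_id": "S2", "reagent_lot": "", "instrument_serial": "SN-100",
     "calibration_date": None, "room_temp_c": 22.0},
]
print(audit_metadata(records))
print(f"{completeness(records):.0%} of required fields filled")
```

Reporting gaps per sample, rather than a single pass/fail flag, tells the lab exactly which entries to chase down before the dataset is shared.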

For organizations managing large-scale data

- Build metadata validation into data pipelines. Automated quality checks should verify that incoming datasets meet minimum metadata requirements before ingestion into analytical workflows.

- Create cross-study metadata harmonization layers. When integrating data from multiple sources, standardize metadata fields, units, and ontologies at the integration layer rather than leaving reconciliation to downstream analysts. Organizations in regulated industries face even higher stakes - poorly tracked metadata can compromise regulatory submissions. Modern electronic quality management systems (eQMS) are designed specifically to enforce metadata completeness and audit traceability across the drug development lifecycle.

- Invest in provenance tracking. Every transformation applied to a dataset - normalization, filtering, batch correction - should be logged with full parameters so that downstream users can trace how data arrived at its current state. Platforms designed for regulatory compliance and document management embed these provenance-tracking capabilities natively, reducing the burden on individual researchers to maintain audit trails manually.
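To illustrate what logging every transformation with full parameters might look like, here is a minimal Python sketch. The `ProvenanceLog` class and the two toy transformations are hypothetical and stand in for real pipeline steps; this is not the API of any particular platform, and production systems would typically persist the log alongside the data.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(values):
    """Short content hash so a log entry can be tied to the exact data."""
    payload = json.dumps(values, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()[:12]


class ProvenanceLog:
    """Records each transformation with its parameters and data hashes."""

    def __init__(self):
        self.entries = []

    def apply(self, step_name, func, values, **params):
        """Run func(values, **params) and append a log entry describing it."""
        result = func(values, **params)
        self.entries.append({
            "step": step_name,
            "params": params,
            "input_hash": fingerprint(values),
            "output_hash": fingerprint(result),
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        return result


def filter_below(values, threshold):
    """Toy filtering step: drop values below the threshold."""
    return [v for v in values if v >= threshold]


def scale_to_max(values):
    """Toy normalization step: divide every value by the maximum."""
    peak = max(values)
    return [v / peak for v in values]


log = ProvenanceLog()
raw = [2.0, 8.0, 0.5, 4.0]
filtered = log.apply("filter_below", filter_below, raw, threshold=1.0)
normalized = log.apply("scale_to_max", scale_to_max, filtered)
print(json.dumps(log.entries, indent=2))
```

Because each entry stores both an input and an output hash, a downstream user can check that the steps chain together: the output hash of one step should equal the input hash of the next.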

These steps do not eliminate metadata leakage, but they significantly reduce its propagation across studies and domains.

Closing thoughts

The gradual accumulation of minute experimental variations creates interpretability drift that slows scientific advancement across every domain. Addressing metadata leakage requires action on two fronts: technological infrastructure that captures experimental context automatically at the point of data generation, and a cultural shift within the scientific community toward treating metadata as a first-class element of data collection.

The practical strategies outlined above are not aspirational - they are implementable today. Minimum metadata standards exist for most major data types. Electronic lab notebooks and structured data platforms can enforce completeness. Automated validation pipelines can catch gaps before they propagate.

What remains is the institutional will to prioritize metadata quality alongside data quantity. As the volume of scientific data grows exponentially, the cost of ignoring this problem grows with it.

Exploring how to build metadata integrity into your data workflows? Learn how Unobio's Vault eQMS enforces data provenance and audit traceability across the drug development lifecycle.